The Double-Edged AI Sword

- tags

- #Security #Pentesting

- categories

- Security Pentesting

- published

- reading time

- 7 minutes

AI is the hot new thing to talk about, fueling public uncertainty and debate. This post discusses the convoluted journey that led me to develop my own take on it.

Preface

Open LinkedIn and tell me: what do you see? If your feed is anything like mine, filled with posts from people I happened to meet once at a conference and never again, odds are high that without even moving a finger to scroll, you’ll see a post vigorously proclaiming the virtues or demerits of AI within the world of software and security.

Some preach it as the next big revolution, reducing the need for programmers down to near zero. Others claim it is of no use whatsoever. Nobody is sure, everyone is guessing, and whilst the ‘visionaries’ of today have their own strong convictions, many sit on the sidelines - unsure about what the future may hold, and wary of jumping into the deep end that is this AI hype bubble.

You may at this point wrinkle your nose at the fact that I’ve called the current state of affairs an AI hype bubble. However, I never promised to be an impartial party.

Instead, I find myself in the middle, often trying to figure out where the IT industry is going and debating with myself what AI means for my future in the security and software industry. Recently, however, I inadvertently fell down an interesting rabbit hole, which has left me perhaps a little more enlightened than before.

Vulnerability Research

What I am referring to is a vulnerability which I recently found and disclosed. The company whose product I tested will remain unnamed; it suffices to know that they’re a young tech startup (kind enough to listen to a weekend hacker, and smart enough to fix disclosed issues) with a very interesting product, big goals, and a small IT team.

This mix of big goals and a small IT team has naturally led them to develop (successfully) a number of websites using AI, more specifically Lovable. So, you may be thinking, if a tech startup can create a product by employing a minimal number of IT workers, that’s minus one to the programmer, plus one to our future AI overlords, right? Wellll, not quite.

To understand why, it’s important to understand the vulnerability itself. And to do that, you’ll have to bear with me for a bit.

Recon

The issue was first discovered through a subdomain of company.com. The site presents a login page for a web management portal. Entering an email and password and clicking ‘Sign in’ with the wrong credentials returns a 400 error response from $projectID$.supabase.co.

Knowing that Supabase is used as the database system, I got curious and decided to have a quick look at their developer documentation¹. Interestingly, it shows that Supabase by default provides a RESTful API for each database at https://$projectID$.supabase.co/rest/v1/.

So far no problems then (except for the perhaps questionable decision to have the user’s browser talk directly to the database API, as opposed to going through some form of middleware).

However, the Supabase documentation states that:

“Your database’s auto-generated Data API exposes the public schema by default”.

This means that by default, the schema for a database is visible to anyone who sends a GET request to $projectID$.supabase.co/rest/v1. The only thing we need is the API key, which is public (it is the key for the standard Supabase anon user) and is automatically included in an HTTP header when we click on “Sign in”. That was the case for this specific site, and sending a GET returned the entire schema (of which a part can be seen in the image below):

Great, so now that I know what calls I can make and what those calls return, everything is accessible, right? Well, so far, none of this is an issue by itself: simply knowing that there is a database and what calls you can make to it does not mean it is insecure (though it is noteworthy that many modern implementations of GraphQL have introspection disabled by default, whereas Supabase has it enabled by default).
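To make the schema request concrete, here is a minimal Python sketch. It only builds the request and never sends it, and it parses a made-up sample response instead of real data. The placeholder project ID, key, and table names are hypothetical, and I’m assuming the API root answers with an OpenAPI-style description containing a top-level "paths" object, which is how PostgREST (the layer Supabase’s Data API is built on) describes itself.

```python
import json
import urllib.request


def build_schema_request(project_id: str, anon_key: str) -> urllib.request.Request:
    """Build (but do not send) a GET request for the auto-generated API root."""
    url = f"https://{project_id}.supabase.co/rest/v1/"
    # The public anon key travels in the "apikey" header, as seen on sign-in.
    return urllib.request.Request(url, headers={"apikey": anon_key}, method="GET")


def list_tables(openapi_doc: dict) -> list[str]:
    """Return table/view names found under "paths", skipping the root and RPC entries."""
    paths = openapi_doc.get("paths", {})
    return sorted(
        path.lstrip("/")
        for path in paths
        if path != "/" and not path.startswith("/rpc/")
    )


# Hypothetical, heavily trimmed response body (not taken from the real target).
SAMPLE_DOC = json.loads(
    '{"paths": {"/": {}, "/devices": {}, "/orders": {}, "/rpc/do_thing": {}}}'
)

req = build_schema_request("PROJECT_ID", "ANON_KEY")
tables = list_tables(SAMPLE_DOC)
print(req.full_url)
print(tables)
```

Passing the request to urllib.request.urlopen would actually send it, which should only ever be done against systems you are authorised to test.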

The (Mis-)Configuration

In a (well-configured) setup where database access is meant to be restricted to authenticated users, this is basically all I’d be able to do as an unauthenticated (anon) user. This is because by default:

- Supabase enforces a security measure known as Row Level Security (RLS),

- They state: “Once you have enabled RLS, no data will be accessible via the API when using the public anon key, until you create policies”.

In addition to the security measures provided by default when using Supabase, Lovable has been kind enough to provide their own additional ones (for Supabase specifically)² in the form of two automatic scanners:

- RLS analysis: Which “reviews database access policies and row-level security rules”,

- Database security check: Which “Reviews database schema and RLS configuration”.

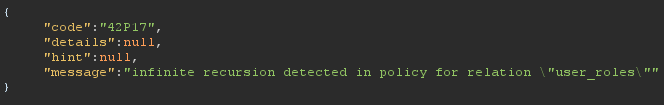

And in fact, this is implemented properly for certain parts of the database. For example, if I try to send a GET request to $projectID$.supabase.co/rest/v1/specificEndpoint, I get the error response seen in the image below, which indicates that an RLS policy is set up preventing me from querying the data as anon.

However, this was not the case for the entire database. Sending a GET request to several other endpoints returned very specific, complete, and definitely not public information related to the backend operations of the company in question.
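The difference between the protected and the exposed endpoints can be triaged with a few lines of Python. This is a rough sketch under my own assumptions: the status codes and response bodies are illustrative guesses rather than values captured from the real target, and classify_response is a hypothetical helper, not part of any Supabase tooling.

```python
import json


def classify_response(status: int, body: str) -> str:
    """Roughly label what an anon GET against one endpoint revealed."""
    if status in (401, 403):
        # Rejected outright: access control is doing its job for anon.
        return "blocked"
    if status == 200:
        try:
            rows = json.loads(body)
        except json.JSONDecodeError:
            return "unknown"
        if isinstance(rows, list):
            # An empty list may simply mean the rows were filtered out,
            # so only a non-empty list counts as exposed data.
            return "exposed" if rows else "empty"
    return "unknown"


# Made-up responses standing in for the kinds of behaviour described above.
print(classify_response(401, '{"message": "permission denied"}'))
print(classify_response(200, "[]"))
print(classify_response(200, '[{"id": 1, "status": "active"}]'))
```

Treating an empty list as its own category is deliberate, since an empty result says nothing about whether the table is actually protected or just empty.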

Impact

The impact of this is something which, ultimately, I left up to the company to decide. As mentioned, I could send GET requests, but there were also several endpoints which accepted the POST, PATCH, and DELETE HTTP methods. These could undoubtedly have had a far more severe impact if they had allowed me to modify, remove from, or add to the database.
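For a sense of how the write-capable endpoints can be spotted without sending a single modifying request, here is a small Python sketch that reads them out of the schema. It assumes the schema lists the supported HTTP methods as lowercase keys under each path (as OpenAPI documents do); the sample document and its table names are invented for illustration.

```python
WRITE_METHODS = ("post", "patch", "delete")


def writable_paths(openapi_doc: dict) -> dict[str, list[str]]:
    """Map each path to the write methods its schema entry advertises."""
    result = {}
    for path, operations in openapi_doc.get("paths", {}).items():
        methods = [m.upper() for m in WRITE_METHODS if m in operations]
        if methods:
            result[path] = methods
    return result


# Hypothetical trimmed schema; real documents carry far more detail per method.
SAMPLE_DOC = {
    "paths": {
        "/": {"get": {}},
        "/devices": {"get": {}, "post": {}, "patch": {}, "delete": {}},
        "/reports": {"get": {}},
    }
}

print(writable_paths(SAMPLE_DOC))
```

A method being advertised only means the API knows about it. Whether a write would actually succeed as anon depends on the policies in place, which is exactly the part I left to the company to assess.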

Remediation

Frankly, I can’t claim to know exactly why this exact issue existed. However, here’s an educated guess at what happened:

- The endpoints that rejected my requests were the first added to the database, and the RLS (access control) policies set for them blocked access to the anon user,

- More endpoints were added later. Somehow, maybe on purpose during testing, these endpoints had a different policy set that approved any request from anon,

- This issue went undetected, and thus the RLS protection was bypassed.
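The guess above can be illustrated with a toy model in Python. To be clear, this is not Supabase or Postgres code and not the company’s actual configuration: it is a simplified simulation of how row-level policies behave (no matching policy means no rows, and a policy that always evaluates to true lets everything through), and the Policy class, role names, and rows are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Policy:
    role: str                      # role the policy applies to
    using: Callable[[dict], bool]  # row predicate, like a USING clause


def visible_rows(rows: list[dict], policies: list[Policy], role: str) -> list[dict]:
    """Rows a role can read when row-level security is enabled on the table."""
    applicable = [p for p in policies if p.role == role]
    # With no applicable policy, any() over an empty list is False: default deny.
    return [row for row in rows if any(p.using(row) for p in applicable)]


ROWS = [{"id": 1, "owner": "alice"}, {"id": 2, "owner": "bob"}]

# Early tables: only signed-in users are granted anything.
strict = [Policy("authenticated", lambda row: True)]
# Later tables: a "testing" policy that approves every row for anon.
permissive = [Policy("anon", lambda row: True)]

print(visible_rows(ROWS, strict, "anon"))      # nothing is readable
print(visible_rows(ROWS, permissive, "anon"))  # every row is readable
```

In this model RLS is technically enabled on both tables, which is why a check that only asks "is RLS on?" would not flag the second one.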

Why This Matters

So, what was the point in me doing this write-up, and how does it tie back into my new thoughts on AI? Well, here’s how I see it:

On one hand, the fact that a startup with a minimal team could set up an entire working backend, frontend, and database system is a true testament to how much AI can do, and how the need for grunt-work development is drastically falling.

On the other hand, the system had pretty serious issues which could have jeopardized the operations of the firm. It is irrelevant (in this context) why they happened, and whether they were due to a human or an AI agent. The more relevant factor is that this was both an easily remediated error and an easily findable error³.

This, in my eyes, showcases one thing above all else: the rise of AI coding agents is fundamentally changing the industry and the traditional roles within IT. Just as the rise of computers changed the job of traditional bank tellers, and the rise of the internet meant encyclopedia salesmen were no more, so too is AI changing the traditional role of a software engineer.

In this new age, developers can no longer simply be code monkeys. They need to adapt, and take on responsibilities previously relegated solely to testers, architects, and security engineers. Otherwise, what are we doing if not blindly placing our faith in a computer?

¹ If you’ve never heard of Supabase, it’s (self-titled) “the Postgres development platform”. Ignoring the bold implicit claim of being the one and only Postgres dev platform, it essentially provides an abstraction layer for database development using Postgres. Consider it basically an open-source Firebase alternative.

² Why is this explicitly something Lovable does, you may ask? Well, Lovable has native Supabase integration, which means that most users are pushed to use it specifically. This sort of soft vendor lock-in is something which I believe is also questionable, but I’ll refrain from going into that at this point.

³ After this issue was found and fixed, I took another shot at the system, and managed to actually get access to even the RLS-protected functionality by skipping the management system entirely and going directly to the MQTT data streams, but I’ll leave that for a separate post.